Starting a business takes commitment and effort, and it's important to understand the process. Our articles guide you through each step of starting a new business, so you're well prepared for success.

Steps to starting a business

Start your entrepreneurial journey with our step-by-step guide

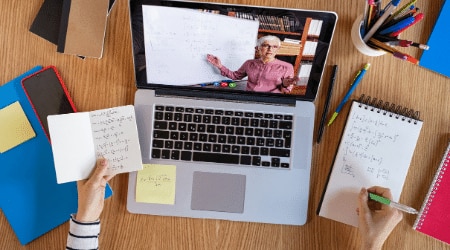

Customer success stories

Why new businesses choose QuickBooks

Save time with automated accounting

75% of customers say QuickBooks saves them more time than their previous method.*

Visualise your financial performance

76% of customers say QuickBooks gives them useful insights and a complete view of their business.*

Boost efficiency and streamline operations

Customers save 13.3 hours a week compared to their previous method.*

Disclaimer: *Based on a September 2022 survey of small businesses using QuickBooks Online in global markets who stated they previously used a spreadsheet to manage their business finances. Global markets exclude AU, BR, CA, FR, IN, MX, UK, and US.